Synesthesia and Music Notation

Over the centuries, humanity has developed powerful tools to transmit knowledge, techniques, expertise, and creativity. We have mastered the art of notating music, enabling its transcription onto paper and dissemination across cultures and generations. Technological advancements have further expanded our creative capacities and the sophistication of musical notation. However, for some individuals, there remains a significant barrier—not to creativity but to the transmission of compositions.

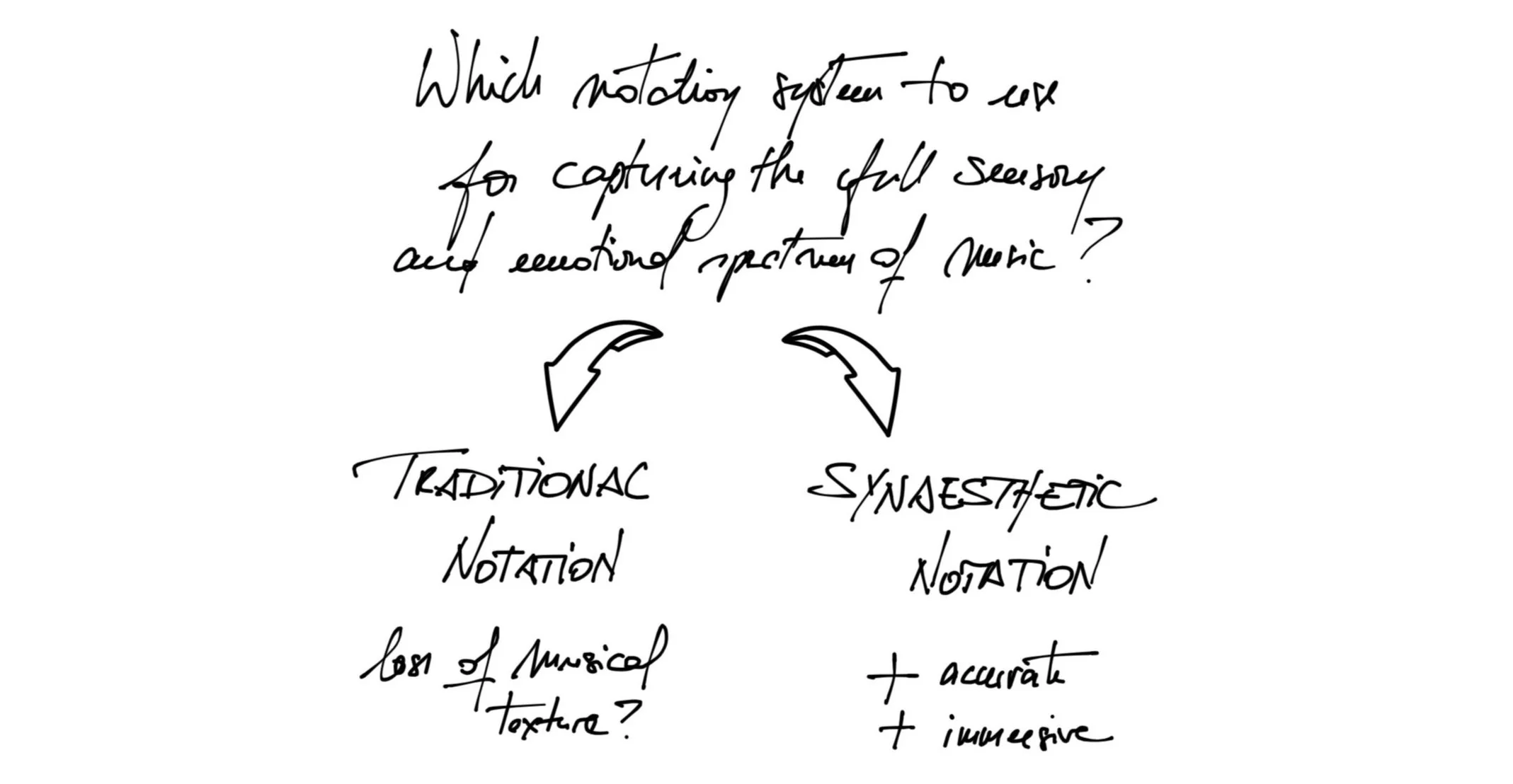

Composition may become less accessible for people like me, with dyslexia and dyscalculia, who may struggle to manipulate traditional musical notation. In my experience as an autistic individual with synaesthesia, conventional musical notation barely captures the experiential richness of musical composition. Reading this notation is also a barrier to learning, and it later affects the ability to play music. For me, a musical score is a monumental hurdle in learning a piece; I find it much more efficient to learn pieces by ear. This is not a question of virtuosity but a fundamental issue of access to musical content. Describing music with mere black-and-white notes and a daunting array of grammatical signs is akin to describing the taste of a pear without being able to experience the fruit with all the human senses. Some have skilfully achieved this through talent and genius, but it can come at a cost: a loss of substance and energy for both the creator and the receiver.

Accessibility Challenges in Music Composition

I would like to propose an analogy from graphic design to illustrate the limitations of traditional music notation in capturing the full sensory and emotional spectrum of music, especially from a synaesthetic perspective. RGB and CMYK are two colour models that serve different purposes in visual media. The RGB (Red, Green, Blue) model is a trichromatic colour space based on the additive principle, in which colours are created by adding light: it starts from black (no light) and adds red, green, and blue light in varying intensities, up to white, which is all three at full intensity. The CMYK (Cyan, Magenta, Yellow, Black) model is a four-colour model based on the subtractive principle: it starts from white (the colour of the paper) and adds layers of cyan, magenta, yellow, and black ink, which absorb specific wavelengths and reduce the light reflected back to the eye, creating the desired print colours. RGB is best suited to media where light itself creates the colour (such as screens), whereas CMYK is best suited to situations where colour results from light being absorbed and reflected by a physical medium (such as printed materials). Printing colour content (e.g., a digital artwork such as a picture, a photograph, or an illustration) from a screen onto paper therefore requires considerable computational effort to reconcile the two models and translate a three-dimensional system into a four-dimensional one. Even then, converting RGB to CMYK often loses colour fidelity, because the range of colours (gamut) that RGB can represent does not perfectly overlap with that of CMYK. RGB and CMYK are, in effect, two different operating systems that can “speak” to each other, but only at a cost in computational energy and colour fidelity.
Similarly, converting music as a synaesthete experiences it into traditional notation can strip away much of its richness and depth, as some sensory nuances simply cannot be captured or are lost in translation. To push the argument beyond synaesthesia alone, traditional notation may only partially capture the vivid, dynamic, multisensory essence of how humans experience music.
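To make the "three dimensions into four" point of the analogy concrete, here is a minimal sketch of the textbook, device-independent RGB-to-CMYK formula in Python. It is an illustration under stated assumptions, not how print software actually works: real workflows convert through ICC colour profiles, and it is the printer's narrower gamut, not this arithmetic, that causes the loss of fidelity described above. The function names `rgb_to_cmyk` and `cmyk_to_rgb` are hypothetical.

```python
def rgb_to_cmyk(r: int, g: int, b: int) -> tuple[float, float, float, float]:
    """Naive conversion from 8-bit RGB (0-255) to CMYK fractions (0.0-1.0).

    Hypothetical helper for illustration only: it ignores ICC profiles,
    ink behaviour and paper, so it shows the change of dimension, not gamut loss.
    """
    # Normalise each light channel to the range 0.0-1.0.
    r_, g_, b_ = r / 255, g / 255, b / 255
    # Black ink supplies the darkness shared by all three channels.
    k = 1 - max(r_, g_, b_)
    if k == 1:
        # Pure black: no light at all, so only black ink is needed.
        return (0.0, 0.0, 0.0, 1.0)
    # Cyan, magenta and yellow absorb red, green and blue respectively;
    # each covers the missing light that black has not already handled.
    c = (1 - r_ - k) / (1 - k)
    m = (1 - g_ - k) / (1 - k)
    y = (1 - b_ - k) / (1 - k)
    return (c, m, y, k)


def cmyk_to_rgb(c: float, m: float, y: float, k: float) -> tuple[int, int, int]:
    """Inverse of the naive formula above, rounded back to 8-bit RGB."""
    return (
        round(255 * (1 - c) * (1 - k)),
        round(255 * (1 - m) * (1 - k)),
        round(255 * (1 - y) * (1 - k)),
    )


if __name__ == "__main__":
    # Three numbers go in, four come out.
    print(rgb_to_cmyk(255, 0, 0))  # pure red
    # Two different ink recipes that land on the same grey on screen.
    print(cmyk_to_rgb(0.0, 0.0, 0.0, 0.5))
    print(cmyk_to_rgb(0.5, 0.5, 0.5, 0.0))
```

The last two lines show the extra degree of freedom in the four-ink model: different recipes can describe the same screen colour, so the translation adds choices rather than information, and choosing among them is part of the conversion effort.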

Above all, musical composition is a full mind-body-system experience. Before notes can be put down on paper to construct a piece, the composer needs to imagine the sounds, organise them, make them “talk to each other”, and clash them together to see how different combinations and configurations react in the ear and throughout the body. Music and rhythm are first felt in the body (in my case, in the left shoulder) and then travel through mysterious pathways within cells and nerve networks, emerging as sound through finger snaps, foot taps, or other bodily rhythms. Sounds also arise spontaneously within the crevices of our brains, resonating with both our internal states (our emotions, waves of altered consciousness, ruminations, anticipations, daydreams, and other forms of multimodal mental imagery) and the external environment (what we hear, the natural and artificial soundscapes we are immersed in daily, the music we listen to). In my experience of creating music as a synaesthete, before sounds become notes they are forms, sensations, vibrations, shivers, echoes of colours and hues, even entities with personalities of their own, traversing my nervous system and the twists and turns of my cognition until they take shape before my mind’s eye as a fantastic visual show. As a whole, then, my synaesthetic mind-body system acts as a powerful resonator for composing, transmitting, and embodying (pure) musical expression. Below is a score I am working on, which tentatively renders about 5% of the actual bodily experience.